I got folderclone installed and set up with the quickstart.py file and credentials.json file. Trying to use Python on Windows freaking sucks.

Should make my deadline. Been working at this for a while now and got stuck. Managing about 2TB/day using this method.

Running the following command for each service account in a bash script:

`# SERVICE 1 #`
`rclone sync --progress --transfers=15 --fast-list --max-transfer 745G --log-file /path/to/sync.log SOURCE:STUFF Service1:STUFF`

I linked several service accounts to the same Team Drive but am only using like 3.

`service_account_file = /path/to/creds.json`

Abandoned all scripts, even though I can run it in a few minutes now lol.

Still stuck using the 750 GB/day regular transfer.

`WARNING: You do not appear to have access to project or it does not exist.`
`ERROR: () PERMISSION_DENIED: The caller does not have permission`
`ERROR: () PERMISSION_DENIED: Service accounts cannot create projects without a parent.`

Set all accounts to have edit access and it's still struggling. Thought it would work but don't know why I'm getting permission errors.

When running, I get the error below:

`Enabling services`
`File "gen_sa_accounts.py", line 233, in serviceaccountfactory`
`File "gen_sa_accounts.py", line 33, in _create_remaining_accounts`
`File "gen_sa_accounts.py", line 93, in _list_sas`
`resp = iam.projects().serviceAccounts().list(name='projects/' + project,pageSize=100).execute()`
`File "/usr/local/lib/python3.7/dist-packages/googleapiclient/_helpers.py", line 130, in positional_wrapper`
`File "/usr/local/lib/python3.7/dist-packages/googleapiclient/http.py", line 856, in execute`
`raise HttpError(resp, content, uri=self.uri)`

This script has been the most consistent. I attempted to retry and the URL auth screen redirects me to localhost instead of working now. I received a 403 error when it attempted to get the json files for the service accounts but the accounts were created.
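For anyone wanting to reproduce the one-sync-per-service-account approach mentioned in this update, here is a minimal sketch in Python rather than bash. It only builds and prints the commands. The remote names `Service1`, `Service2`, `Service3` follow the naming in the post, the count of 3 comes from "only using like 3", and `SOURCE:STUFF` and the log path are the post's own placeholders. The helper names `build_sync_command` and `build_all` are made up for illustration.

```python
import shlex


def build_sync_command(index, source="SOURCE:STUFF", log_file="/path/to/sync.log"):
    """Return the rclone argument list for the index-th service-account remote.

    Assumption: each remote is named Service<index> in the rclone config and
    has its own service_account_file entry, as the post suggests.
    """
    return [
        "rclone", "sync",
        "--progress",
        "--transfers=15",
        "--fast-list",
        "--max-transfer", "745G",  # stop just under the 750 GB/day cap
        "--log-file", log_file,
        source,
        f"Service{index}:STUFF",
    ]


def build_all(count):
    """Build one sync command per service-account remote, numbered from 1."""
    return [build_sync_command(i) for i in range(1, count + 1)]


if __name__ == "__main__":
    # Print the commands instead of running them, so nothing is transferred.
    for argv in build_all(3):
        print(shlex.join(argv))
```

To actually run the transfers, each list could be passed to `subprocess.run`, one after another. Keep in mind that rclone may exit with a non-zero status once `--max-transfer` is hit, so a wrapper should probably not treat that as a failure.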
I git cloned the repo and attempted to run the multimanager.py file ($ python multimanager.py) but it returned nothing.

Despite being in Chinese, I got the farthest with this script. The Enable Drive API link does not work so I enabled it manually.

Everything installs fine but when I attempt to run $ multimanager interactive, the command is not valid.

I've used the following methods to attempt to do this and have run into problems each time:

Anything that required the Python Quickstart from Google:

When I copy the URL from my headless VPS and open it in a browser on my local machine, I am either redirected to localhost, which does not verify, or I get an invalid result.

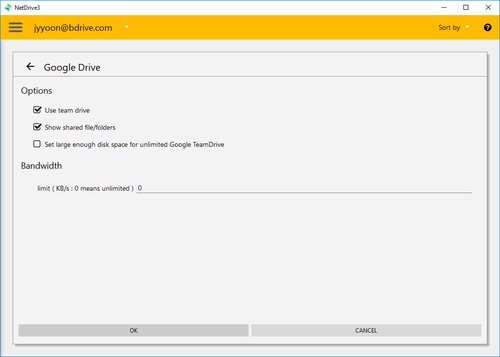

Changed to AutoRclone as it’s better documented. Tried to use folderclone but ran into issues.

I thought that Drive to Drive was exempt? Any way around this since I now have this VPS at my disposal? Answered: Use service accounts

Spun up a Google Compute VPS (Linux) and am using this command:

`rclone copy --drive-server-side-across-configs=true --progress --drive-chunk-size 64M --fast-list --ignore-existing --size-only --verbose --transfers 10 "Old:Stuff" "New:Stuff"`

Help me rclone community, you're my only hope. I'm new to rclone as of this month and with the deadline fast approaching have barely made a dent in the content (100/100 up/down bandwidth).

- Moving content to a GSuite Business unlimited account (but cannot share it directly as I have no access to source)
- Files are on Source Drive which there is no access to
- Files are shared to Middle Drive that has rclone set up
- Copying files to Destination Drive from Middle Drive

Can I have rclone automatically create a new folder (which I can share) and stop when it reaches a certain size? Say 10GB and then have a separate process move the content from this shared folder to my destination account (which would then allow the folder to refill).

From what I'm aware of, this would allow for a GB/s transfer rather than MB/s and I could finish in days instead of months.

- I do not have direct access to the source account any longer
- Content is shared with a secondary stock Gmail account with 15GB of storage which I have rclone set up on
- Source account has 50TB of content and is being terminated at the end of the billing cycle w/o the option to renew

I'm looking to find a faster way than what I'm currently doing (a simple copy that requires down/upload).
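To put rough numbers on "days instead of months", here is a back-of-the-envelope sketch in Python. It assumes that "100/100" means 100 Mbit/s in each direction, that the link stays fully saturated, and that sizes are decimal (1 TB = 10^12 bytes). The 750 GB/day cap, the 745G limit and the three service accounts are taken from elsewhere in this thread. The function names are made up for illustration.

```python
TB = 10**12  # decimal terabyte, an assumption about how the 50TB is counted
GB = 10**9
SECONDS_PER_DAY = 86400


def days_at_bandwidth(total_bytes, megabits_per_second):
    """Days needed when the network link is the bottleneck."""
    bytes_per_second = megabits_per_second * 10**6 / 8
    return total_bytes / bytes_per_second / SECONDS_PER_DAY


def days_at_daily_cap(total_bytes, cap_bytes_per_day, accounts=1):
    """Days needed when a per-account daily upload cap is the bottleneck."""
    return total_bytes / (cap_bytes_per_day * accounts)


total = 50 * TB

# Download then upload through a 100 Mbit/s link
print(round(days_at_bandwidth(total, 100), 1))                  # 46.3
# Single account at the 750 GB/day cap
print(round(days_at_daily_cap(total, 750 * GB), 1))             # 66.7
# Three service accounts at 745G each per day
print(round(days_at_daily_cap(total, 745 * GB, accounts=3), 1))  # 22.4
```

Under these assumptions, three accounts at 745G each come to roughly 2.2 TB/day, which lines up with the "about 2TB/day" reported in the later update.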
